RL Environment Engineering Lab

We build the infrastructure AI agents train in.

Containerized replicas of real enterprise software, instrumented with reward signals, failure modes, and full telemetry.

Four components. Each one necessary. Each one designed to the same standard.

Environment engineering

Docker containers that replicate real enterprise software. Realistic data, UI state, and edge cases. Deterministic episodes. Clean boot in under two seconds.
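As a rough illustration of what "deterministic episodes" means in practice, here is a minimal Python sketch. The `TicketQueueEnv` class, its fields, and the ticket data are hypothetical and stand in for a real containerized environment; the only point is that every episode draws its initial state from a private, seeded random generator.

```python
import random
from dataclasses import dataclass, field


@dataclass
class TicketQueueEnv:
    """Hypothetical toy environment: a small queue of support tickets."""

    seed: int
    tickets: list = field(default_factory=list)

    def reset(self) -> list:
        # A private RNG seeded per episode keeps the initial state
        # independent of global random state, so the same seed always
        # produces the same episode.
        rng = random.Random(self.seed)
        self.tickets = [
            {"id": i, "priority": rng.choice(["low", "medium", "high"])}
            for i in range(5)
        ]
        return self.tickets


first = TicketQueueEnv(seed=42).reset()
second = TicketQueueEnv(seed=42).reset()
assert first == second  # identical seed, identical initial state
```

Seeding per episode rather than globally means a failed run can be replayed exactly from its seed.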

Reward signal design

Multi-signal reward functions scoped to your agent's task. Calibrated scoring across task completion, efficiency, error recovery, and action precision.
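One simple way to combine the four signals named above is a weighted average. The sketch below assumes each signal is already normalized to the range 0 to 1; the `EpisodeSignals` type, the `combined_reward` function, and the weights are all hypothetical placeholders, not calibrated values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EpisodeSignals:
    """Per-episode signals, each assumed to be normalized to [0, 1]."""

    task_completion: float
    efficiency: float
    error_recovery: float
    action_precision: float


# Hypothetical weights for illustration only; real weights would be
# calibrated against the agent's task.
WEIGHTS = {
    "task_completion": 0.5,
    "efficiency": 0.2,
    "error_recovery": 0.15,
    "action_precision": 0.15,
}


def combined_reward(signals: EpisodeSignals, weights: dict = WEIGHTS) -> float:
    """Weighted average of the signals, normalized by the total weight."""
    total_weight = sum(weights.values())
    score = sum(w * getattr(signals, name) for name, w in weights.items())
    return score / total_weight


episode = EpisodeSignals(
    task_completion=1.0, efficiency=0.5, error_recovery=0.0, action_precision=1.0
)
assert round(combined_reward(episode), 2) == 0.75
```

Dividing by the total weight keeps the reward in the same 0 to 1 range even if the weights are later retuned and no longer sum to one.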

Adversarial conditions

Injected failure modes, timeouts, ambiguous form states, and conflicting data. Your agent trains on the conditions that actually break production deployments.
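Failure injection of this kind can be sketched as a thin wrapper around an environment action. In the Python example below, `FaultInjector`, `InjectedTimeout`, and `submit_form` are hypothetical names, and a seeded random generator decides when a simulated timeout replaces the real response, so that injected failures stay reproducible.

```python
import random


class InjectedTimeout(Exception):
    """Raised in place of a real response to simulate a timeout."""


class FaultInjector:
    """Wraps an action and fails a configurable fraction of calls."""

    def __init__(self, action_fn, timeout_rate: float, seed: int):
        self._action_fn = action_fn
        self._timeout_rate = timeout_rate
        # Seeded RNG so the same episode sees the same failures.
        self._rng = random.Random(seed)

    def __call__(self, *args, **kwargs):
        if self._rng.random() < self._timeout_rate:
            raise InjectedTimeout("simulated timeout")
        return self._action_fn(*args, **kwargs)


def submit_form(payload: dict) -> dict:
    # Hypothetical stand-in for a real environment action.
    return {"status": "ok", "echo": payload}


flaky_submit = FaultInjector(submit_form, timeout_rate=0.3, seed=0)
outcomes = []
for _ in range(10):
    try:
        outcomes.append(flaky_submit({"field": "value"})["status"])
    except InjectedTimeout:
        outcomes.append("timeout")
```

Because the wrapper leaves the underlying action untouched, the same environment can run with or without adversarial conditions by changing only `timeout_rate`.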

Benchmark as a service

We build the benchmark environment and run evaluations against it. Task suites scoped to your agent's domain.

The environment fidelity problem

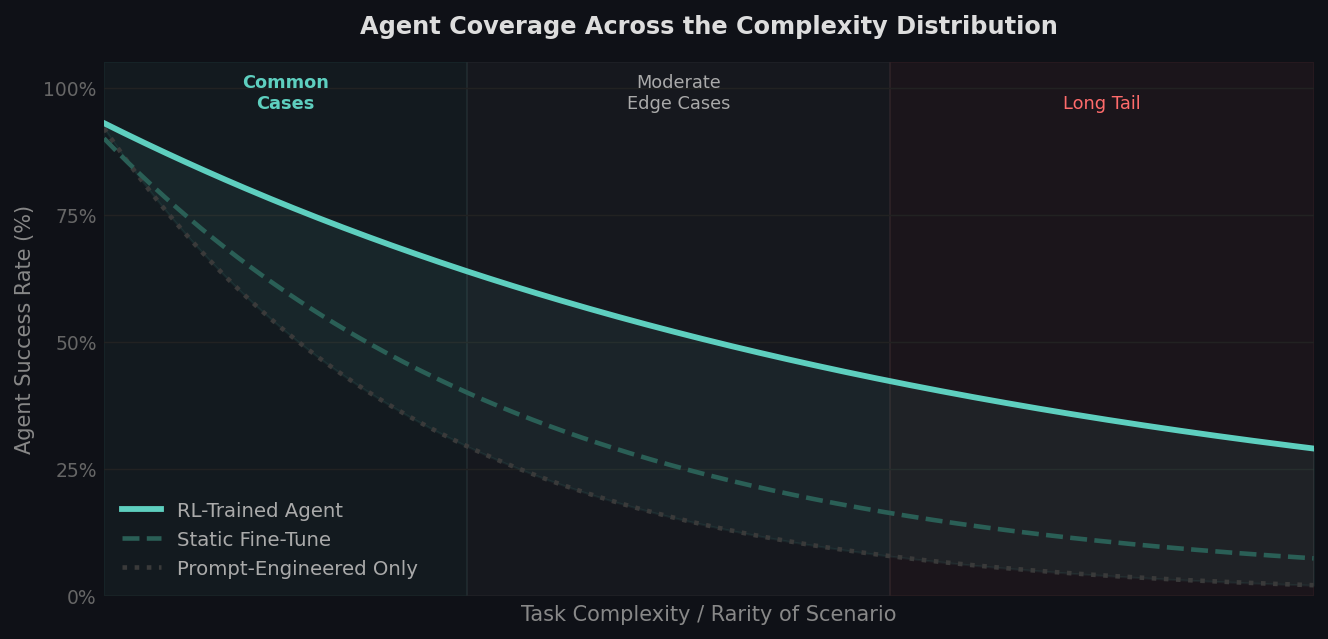

Most production failures begin in the training environment.

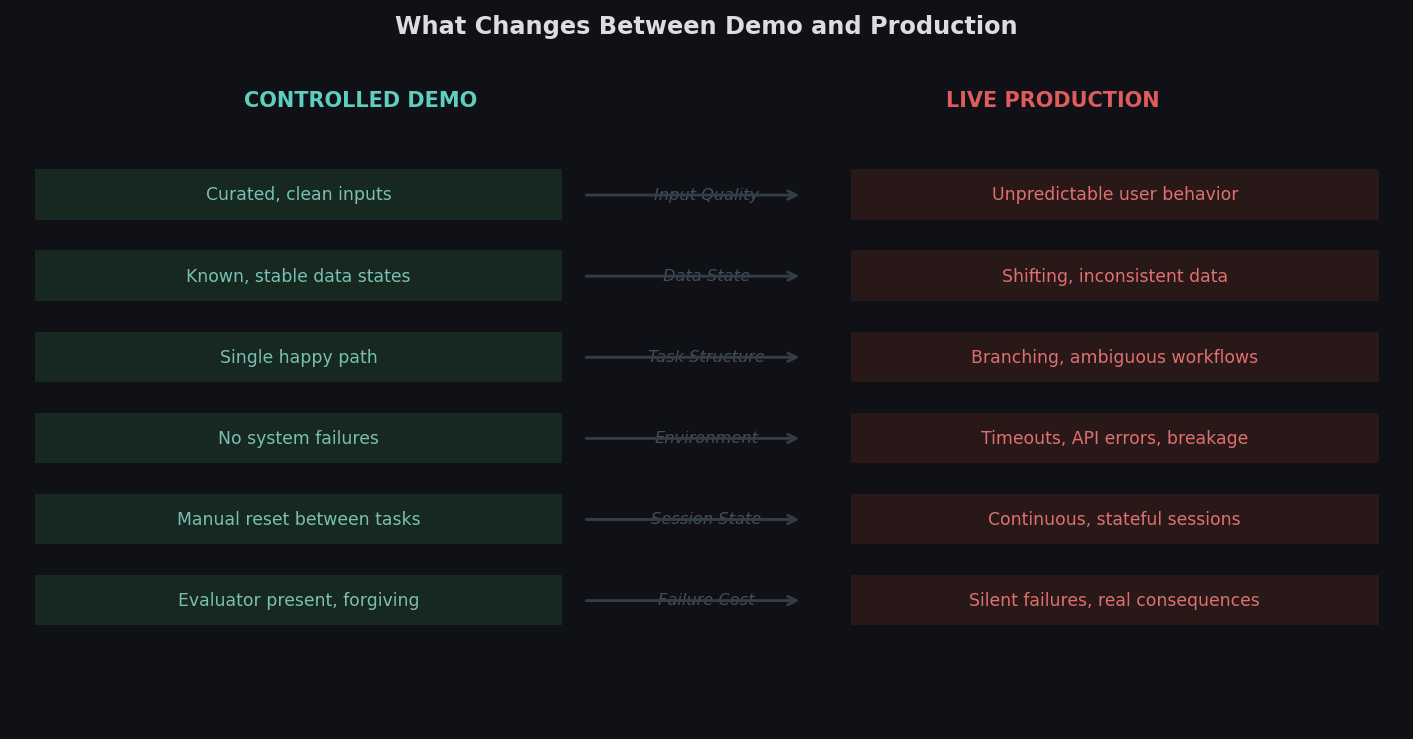

In most AI training settings, the environment is trivial to define. A coding agent has a compiler. A math model has a verifier. But enterprise AI agents operate in software that was never designed for training.

Interfaces change. Data is inconsistent. Workflows break in ways that are hard to predict and harder to reproduce. Edge cases don't appear in demos. They appear in production.

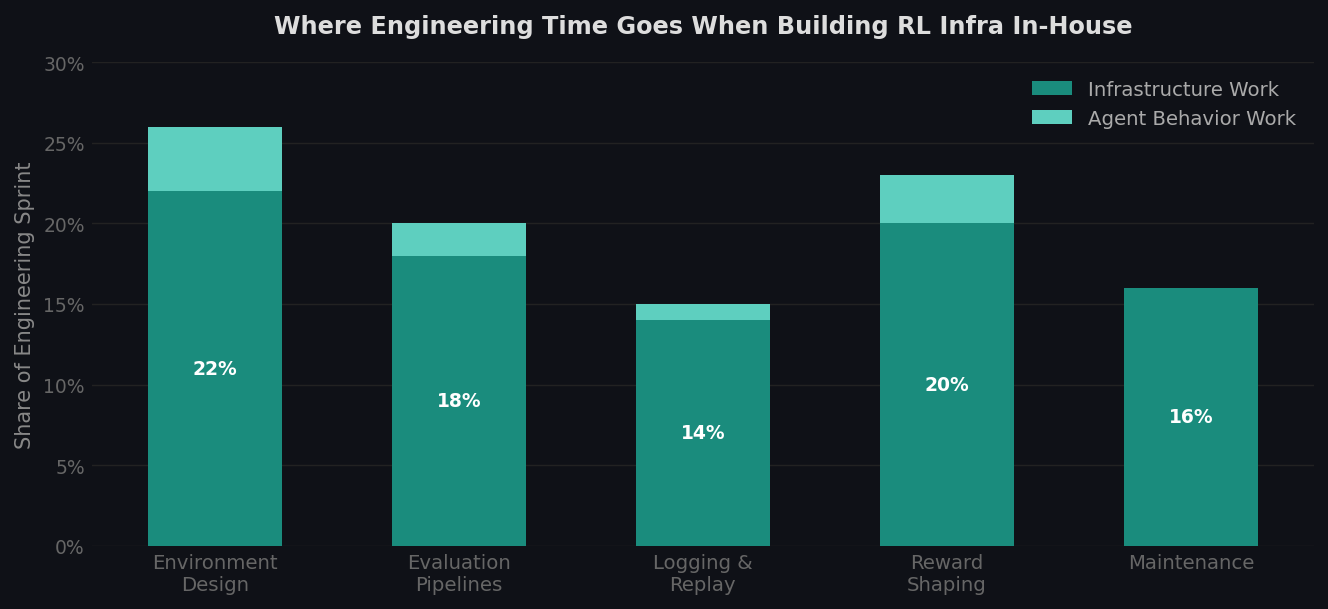

Most teams treat the training environment as routine infrastructure work. They build a rough approximation and move on. The agent learns from that approximation. Then it meets the real thing.

This is where most production failures begin. Not in the model. In the world the model was trained in.

Use cases

Customer service agents

Full replicas of CRM dashboards, ticketing systems, and live conversation flows. Ticket resolution, refund processing, user authentication, escalation handling, multi-channel interactions.

Browser and computer use agents

Browser environments with full DOM access, form fields, navigation flows, and multi-tab task contexts. Desktop simulations covering file systems, spreadsheets, email clients, and SaaS tools.

Enterprise workflow agents

Replicas of the tools enterprise agents run in. Ticket creation, pipeline updates, document editing, message drafting, and multi-step workflows that span multiple applications.


Most agent failures are training failures.

We take on a limited number of teams each quarter because the work requires depth. The first call is 30 minutes.