Agent Companies Should Stop Building Training Infrastructure

There is a pattern that shows up across almost every agent company at a certain stage.

The team ships an agent. It performs reasonably well. Then it hits edge cases. The team decides the fix is more training. They start building environments. And two months later, two or three engineers are maintaining simulation infrastructure full-time, and the agent itself has barely changed.

This is not a resource problem. It is a sequencing problem.

Why Teams Build Infra Instinctively

Engineers default to building. When the alternative is buying something that does not perfectly fit, building feels cleaner. You get full control, exact specs, no vendor dependencies.

That logic holds for core product work. It breaks down for training infrastructure.

Training environments are not core product. They exist to serve the agent. The agent is the product. When the environment starts consuming more engineering attention than the agent, the priorities have inverted.

What Building Actually Costs

Teams tend to estimate environment work by the initial build. Most of the real cost comes after it.

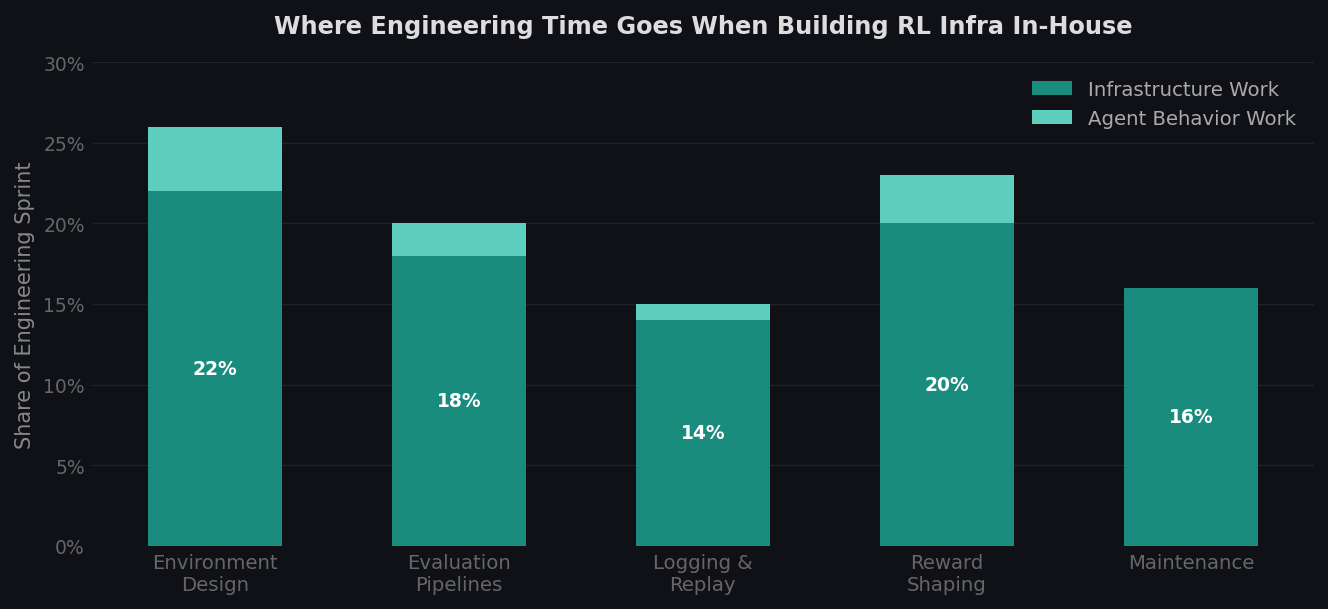

Here is where engineering time actually goes:

- Environment design: Cloning real software behavior is not straightforward. State representations, action spaces, realistic data: none of it is trivial to get right. The first version usually does not produce useful training signal.
- Evaluation pipelines: You need a way to score agent behavior. That means defining success criteria, writing scoring logic, and handling edge cases in the evaluation itself.
- Logging and replay: To understand why an agent failed, you need full episode replay. Building that from scratch takes more time than most teams expect.
- Reward shaping: Getting reward signals to produce the behavior you want requires iteration. Sparse rewards often give the agent too little signal to learn from. Dense rewards invite the agent to optimize the shaping terms instead of the task. Finding the right shape is its own research problem.
- Maintenance: The target software changes. API contracts shift. Data schemas update. Someone has to keep the environment in sync with production reality.

None of this is the agent getting better. All of it has to happen before the agent can learn anything.
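The list is easier to weigh with a concrete skeleton. The sketch below is a hypothetical toy, not any real product or library API: `FormEnv`, `replay`, and `score` are illustrative names, and a form-filling task stands in for cloned software. It shows the listed pieces in miniature: a state and action space, a sparse task reward with an optional potential-based shaping term (γΦ(s′) − Φ(s), one standard way to add dense signal), an episode log, a replay function, and a success criterion.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

# Hypothetical toy task standing in for "cloned software": the agent must
# fill a form so that every field matches a target value.
Action = Tuple[str, str]  # (field_name, value)


@dataclass
class Step:
    state: Dict[str, str]  # state before the action
    action: Action
    reward: float


@dataclass
class FormEnv:
    target: Dict[str, str]
    gamma: float = 0.99
    shaped: bool = False
    state: Dict[str, str] = field(default_factory=dict)
    log: List[Step] = field(default_factory=list)

    def reset(self) -> Dict[str, str]:
        # Environment design: every field starts empty; actions set one field.
        self.state = {k: "" for k in self.target}
        self.log = []
        return dict(self.state)

    def _potential(self, state: Dict[str, str]) -> float:
        # Fraction of fields already correct; used only for shaping.
        return sum(state[k] == v for k, v in self.target.items()) / len(self.target)

    def done(self) -> bool:
        return self.state == self.target

    def step(self, action: Action) -> Tuple[Dict[str, str], float, bool]:
        name, value = action
        before = dict(self.state)
        if name in self.state:  # unknown fields are treated as no-ops
            self.state[name] = value
        # Sparse reward: 1.0 only when the whole task is complete.
        reward = 1.0 if self.done() else 0.0
        if self.shaped:
            # Potential-based shaping term: gamma * phi(s') - phi(s).
            reward += self.gamma * self._potential(self.state) - self._potential(before)
        # Logging: record enough to replay the episode later.
        self.log.append(Step(before, action, reward))
        return dict(self.state), reward, self.done()


def replay(env: FormEnv, log: List[Step]) -> List[Dict[str, str]]:
    """Re-run logged actions from reset and return every state visited."""
    steps = list(log)  # copy first: reset() replaces env.log
    states = [env.reset()]
    for s in steps:
        state, _, _ = env.step(s.action)
        states.append(state)
    return states


def score(env: FormEnv, max_steps: int) -> float:
    """Evaluation: success means the task is complete within the step budget."""
    return 1.0 if env.done() and len(env.log) <= max_steps else 0.0


env = FormEnv(target={"name": "Ada", "plan": "pro"}, shaped=True)
env.reset()
env.step(("name", "Ada"))
env.step(("plan", "pro"))
print(score(env, max_steps=5), len(replay(env, env.log)))  # 1.0 3
```

Even this toy needs every one of these pieces before an agent can learn anything from it. A cloned production application needs each of them at far higher fidelity, plus ongoing maintenance as the real software changes.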

Engineering time breakdown when building RL infrastructure in-house. Agent behavior work accounts for a small fraction of total sprint time.

The Leverage Question

The right question is not "can we build this?" It is "what gets us the fastest improvement in agent behavior?"

Model quality is largely fixed by your choice of foundation model. Prompt engineering has a ceiling. The real variable is how quickly you can run training loops and how much signal those loops produce.

All else being equal, a team that runs 50 training iterations in a month will usually produce a better agent than a team that runs 5. The bottleneck to more iterations is usually the environment, not the agent.

If your engineers are building and maintaining the environment, your iteration speed is bounded by infra work. If the environment is ready and reliable, iteration speed is bounded by how fast you can learn from training runs. Those are very different ceilings.
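The difference between those ceilings can be put in back-of-envelope terms. The sketch below assumes, hypothetically, that infra work is a fixed monthly tax on team hours and that every remaining hour goes into iteration cycles of equal length. `iterations_per_month` and every number in it are illustrative, not measurements.

```python
def iterations_per_month(team_hours: float, infra_hours: float,
                         hours_per_iteration: float) -> int:
    """Training iterations a team can complete in a month.

    Hypothetical model: infra work is taken off the top, and each
    remaining hour goes into equal-length iteration cycles.
    """
    if hours_per_iteration <= 0:
        raise ValueError("hours_per_iteration must be positive")
    available = max(0.0, team_hours - infra_hours)
    return int(available // hours_per_iteration)


team = 3 * 160  # assumed: three engineers at roughly 160 hours each per month
print(iterations_per_month(team, infra_hours=400, hours_per_iteration=16))  # 5
print(iterations_per_month(team, infra_hours=0, hours_per_iteration=16))    # 30
```

With two and a half of three engineers' time going to infra, the assumed 16-hour cycle yields 5 iterations a month; with no infra tax, the same team gets 30. Real teams are messier than this model, but under it every infra hour comes straight out of iteration count.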

Build vs. Buy, Framed Correctly

The build-vs-buy question for training environments is not really about cost. It is about where your team's attention compounds.

Every hour an engineer spends on environment tooling is an hour not spent on reward design, behavior analysis, or curriculum structure. Those are the things that directly determine how good the agent gets.

The two choices also differ in how reversible they are. If you use an external environment and later decide to bring it in-house, you can. If you spend six months building infra and the agent still does not perform, you cannot get that time back.

What Changes When You Stop Building Infra

Teams that use purpose-built environments instead of building their own consistently describe the same shift: training loops start faster, iteration cycles tighten, and engineers work on agent behavior instead of debugging environment edge cases.

That is not a claim about any particular tool. It is just what happens when the environment is not a distraction.

The agents that improve fastest are usually the ones where the team spent the least time thinking about infrastructure.

theta builds RL training environments for production AI agents. We clone real software into containerized environments with instrumented reward signals, so your team can focus on the agent.